Nail (pronounced neyl)

(1) A slender, typically rod-shaped rigid piece of metal, usually made in many lengths and thicknesses, having (usually) one end pointed and the other (usually) enlarged or flattened, and used for hammering into or through wood, concrete or other materials; in the building trades the most common use is to fasten or join together separate pieces (of timber etc).

(2) In anatomy, a thin, horny plate, consisting of modified epidermis, growing on the upper side of the end of a finger or toe; the toughened protective protein-keratin (known as alpha-keratin, also found in hair) at the end of an animal digit, such as a fingernail.

(3) In zoology, the basal thickened portion of the anterior wings of certain hemiptera; the terminal horny plate on the beak of ducks and other allied birds; the claw of a mammal, bird, or reptile.

(4) Historically, in England, a round pedestal on which merchants once carried out their business.

(5) A measure of length for cloth, equal to 2¼ inches (57 mm), 1⁄20 of an ell or 1⁄16 of a yard (archaic); it’s assumed the origin lies in the use of a nail to mark that length on the end of a yardstick.

(6) To fasten with a nail or nails; to hammer in a nail.

(7) To enclose or confine (something) by nailing (often followed by up or down).

(8) To make fast or keep firmly in one place or position (also used figuratively).

(9) Perfectly to accomplish something (usually as “nailed it”).

(10) In vulgar slang, of a male, to engage in sexual intercourse with (as “I nailed her” or, according to Urban Dictionary, “I nailed the bitch”).

(11) In law enforcement, to catch a suspect or find them in possession of contraband or engaged in some unlawful conduct (usually as “nailed them”).

(12) In Christianity, as “the nails”, the relics used in the crucifixion, nailing Christ to the cross at Golgotha.

(13) As the nail (unit), an archaic multiplier equal to one sixteenth of a base unit.

(14) In drug slang, a hypodermic needle, used for injecting drugs.

(15) To detect and expose (a lie, scandal, etc).

(16) In slang, to hit someone.

(17) In slang, intently to focus on someone or something.

(18) To stud with or as if with nails.

Pre 900: From the Middle English noun nail & nayl, from the Old English nægl and cognate with the Old Frisian neil, the Old Saxon & Old High German nagal, the Dutch nagel, the German Nagel, the Old Norse nagl (fingernail), all of which were from the unattested Germanic naglaz. As a derivative, it was akin to the Lithuanian nãgas & nagà (hoof), the Old Prussian nage (foot), the Old Church Slavonic noga (leg, foot) (the Serbo-Croatian nòga, the Czech noha, the Polish noga and the Russian nogá, all of which were probably originally a jocular reference to the foot as “a hoof”), the Old Church Slavonic nogŭtĭ, the Tocharian A maku & Tocharian B mekwa (fingernail, claw), all from the unattested North European Indo-European ənogwh-. It was further akin to the Old Irish ingen, the Welsh ewin and the Breton ivin, from the unattested Celtic gwhīnā; the Latin unguis (fingernail, claw), from the unattested Italo-Celtic əngwhi-; the Greek ónyx (stem onych-); the Sanskrit ághri- (foot), from the unattested ághli-; and the Armenian ełungn from the unattested onogwh-. The Middle English verbs naile, nail & nayle were from the Old English næglian, cognate with the Old Saxon neglian, the Old High German negilen and the Old Norse negla, from the unattested Germanic nagl-janan (the Gothic was ganagljan). The ultimate source was the primitive Indo-European h₃nog- (nail) and the use to describe the metal fastener was from the Middle English naylen, from the Old English næġlan & nægl (fingernail (handnægl)) & negel (tapering metal pin), from the Proto-Germanic naglaz (source also of Old Norse nagl (fingernail) & nagli (metal nail)). Nail is a noun & verb, nailer is a noun, nailless & naillike are adjectives, renail is a verb, nailing is a noun & verb and nailed is a verb & adjective; the noun plural is nails.

Nail is modified or used as a modifier in literally dozens of examples including finger-nail, toe-nail, nail-brush, nail-file, rusty-nail, garden-nail, nail-fungus, nail-gun & frost-nail. In idiomatic use, a “nail in one's coffin” is an experience or event that tends to shorten life or hasten the end of something (applied retrospectively (ie post-mortem) it’s usually in the form “final nail in the coffin”). To be “hard as nails” is either to be “in a robust physical state” or “lacking in human feelings or without sentiment”. To “nail one's colors to the mast” is to declare one’s position on something. Something described as “better than a poke in the eye with a rusty nail” is a thing which, while not ideal, is not wholly undesirable or without charm. In financial matters (of payments), to be “on the nail” is to “pay at once”, often in the form “pay on the nail”. To “nail something down” is to finalize it. To have “nailed it” is “to perfectly have accomplished something” while “nailed her” indicates “having enjoyed sexual intercourse with her”. The “right” in the phrase “hit the nail right on the head” is a more recent addition, all known instances of use prior to 1700 being “hit the nail on the head” and the elegant original is much preferred. It’s used to mean “correctly identify something or exactly to arrive at the correct answer”. Interestingly, the Oxford English Dictionary (OED) notes there is no documentary evidence that the phrase comes from “nail” in the sense of the thing hit by a hammer.

The sense of “fingernail” appears to be the original which makes sense given there were fingernails before there were spikes (of metal or any other material) used to build stuff. The verb nail was from the Old English næglian (to fix or fasten (something) onto (something else) with nails), from the Proto-Germanic ganaglijan (the source also of the Old Saxon neglian, the Old Norse negla, the Old High German negilen, the German nageln and the Gothic ganagljan (to nail)), all developed from the root of the nouns. The colloquial meaning “secure, succeed in catching or getting hold of (someone or something)” was in use by at least the 1760s; hence the law enforcement slang meaning “to effect an arrest”, noted since the 1930s. The meaning “to succeed in hitting” dates from 1886 while the phrase “to nail down” (to fix in place with nails) was first recorded in the 1660s.

Colors: Lindsay Lohan with nails unadorned and painted.

As a noun, “nail-biter” (worrisome or suspenseful event), perhaps surprisingly, seems not to have been in common use until 1999 and it’s applied to things from life-threatening situations to watching close sporting contests. The idea of nail-biting as a sign of anxiety has been in various forms of literature since the 1570s, the noun nail-biting noted since 1805, and since the mid-nineteenth century the noun “nail-biter” was applied to those individuals who “habitually or compulsively bit their fingernails” although this seems to have been purely literal rather than something figurative of a mental state. Now, a “nail-biter” is one who is “habitually worried or apprehensive” and they’re often said to be “chewing the ends of their fingernails” and in political use, a “nail biter” is a criticism somewhat less cutting than “bed-wetter”. The condition of compulsive nail-biting is the noun onychophagia, the construct being onycho- (a creation of the international scientific vocabulary), reflecting a New Latin combining form, from the Ancient Greek ὄνυξ (ónux) (claw, nail, hoof, talon) + -phagia (eating, biting or swallowing), from the Ancient Greek -φαγία (-phagía). A related form was -φαγος (-phagos) (eater), the suffix corresponding to φαγεῖν (phageîn) (to eat), the infinitive of ἔφαγον (éphagon) (I ate), which serves as aorist (essentially a compensator for sense-shifts) for the defective verb ἐσθίω (esthíō) (I eat). Bitter-tasting nail-polish is available for those who wish to cure themselves. Nail-polish as a product dates from the 1880s and was originally literally a clear substance designed to give the finger or toe-nails a varnish-like finish upon being buffed. By 1884, it was being sold as “liquid nail varnish” including shades of black, pink and red although surviving depictions in art suggest men and women in various cultures have for thousands of years been coloring their nails. Nail-files (small, flat, single-cut file for trimming the fingernails) seem first to have been sold in 1819 and nail-clippers (hand-tool used to trim the fingernails and toenails) in 1890.

Francis (1936-2025; pope 2013-2025) at the funeral of Cardinal George Pell (1941-2023), St Peter’s Basilica, the Vatican, January 2023.

The expression "nail down the lid" is a reference to the lid of a coffin (casket), the implication being one wants to make doubly certain anyone within can't possibly "return from the dead". The noun doornail (also door-nail) (large-headed nail used for studding batten doors for strength or ornament) emerged in the late fourteenth century and was often used of many large, thick nails with a large head, not necessarily those used only in doors. The figurative expression “dead as a doornail” seems to be as old as the piece of hardware and use soon extended to “dumb as a doornail” and “deaf as a doornail”. The noun hangnail (also hang-nail) is as awful as it sounds and describes a “sore strip of partially detached flesh at the side of a nail of the finger or toe” and appears in seventeenth century texts although few etymologists appear to doubt it’s considerably older and probably a folk etymology and sense alteration of the Middle English agnail & angnail (corn on the foot), from the Old English agnail & angnail. The origin is likely to have been literally the “painful spike” in the flesh when suffering the condition. The first element was the Proto-Germanic ang- (compressed, hard, painful), from the primitive Indo-European root angh- (tight, painfully constricted, painful); the second the Old English nægl (spike), one of the influences on “nail”. The noun hobnail was a “short, thick nail with a large head” which dates from the 1590s, the first element probably identical with hob (rounded peg or pin used as a mark or target in games (noted since the 1580s)) of unknown origin. Because hobnails were hammered into the leather soles of heavy boots and shoes, “hobnail” came in the seventeenth century to be used of “a rustic person” though it was thought less offensive than forms like “yokel”.

Nails and pins

As designs, a nail and a pin are similar, obviously differing only in scale but the function of each is different. A nail’s primary purpose is to function as a structural fastener joining materials (most typically two or more pieces of wood) although there are specialized nails driven into substrate by impact (variously with hammers or nail guns (sometimes called “pin-nailers”, some of which are built to fire “panel pins” (very slender nails) or small “headless nails”)). A nail relies on friction and compression in the surrounding material for its holding strength. Pins look like scaled-down nails but mostly are used for alignment, retention or pivoting, rather than structural load-bearing. Because of their more delicate construction, pins often are inserted through specific-purpose, pre-existing holes and in many cases are intended to be temporary and are thus removable. Visually, both nails and pins have heads (round, flat, clipped etc) and a tapered shank with a tip pointed for penetration (“snub-nosed” nails do exist but are rare) and both are designed slightly to deform the surrounding material when driven. The most obvious difference is that a pin’s head is very small and some are spherical and made from plastic; they’re designed only to be pushed with finger-pressure rather than being hit with a hammer. Although the term “pin” is used for some specialized devices used in building and engineering (dowel pin, pivot pin, gudgeon pin (also as wrist pin), roll pin, cotter pin etc), the word is most associated with the tailor’s pin (used mostly in textiles and usually clipped to “pin”). In jewelry design and textiles there are also variants including the “lapel pin” and the fashion industry’s device of last resort, the “safety pin”.

Clive Barker's (b 1952) supernatural horror movie Hellraiser (1987) was based on his novella The Hellbound Heart (1986) and was a surprise hit, making it a franchise which has thus far spawned nine sequels, the most recent released in 2022. The plot involved a mysterious puzzle box that, when opened, summoned the Cenobites, a group of extra-dimensional, sadomasochistic beings unable to differentiate between pain and pleasure. It was a good premise for a horror movie but the character who really captured the audience's imagination was the unnamed figure viewers dubbed “Pinhead”. Although Pinhead appeared in the original film for fewer than ten minutes, the character became the franchise’s focal point and has since dominated the publicity material for subsequent releases. The popularity of Hellraiser has been maintained and it’s hoped that for the next release the producers will offer the part to Peter Dutton (b 1970; leader of the Liberal Party of Australia 2022-2025).

Peter Dutton captured by a photographer during a happy moment (left), Pinhead with the box able to summon the Cenobites (centre) and an artist's depiction of Mr Dutton in “Pinhead mode” (digitally altered image, right).

No longer burdened with tiresome parliamentary duties since losing his seat in the 2025 Australian general election, Mr Dutton has time for a third career and he should be good at playing an unsmiling character who speaks in a relentless monotone; really, all he need do is act naturally. It’s suspected also he’ll be good at learning a script given the decades he spent parroting “talking points” and TWS (three word slogans). While it’s an urban myth Mr Dutton wasn’t offered the part of Lord Voldemort in the Harry Potter movie franchise because he was deemed “too scary”, as Pinhead he’d be “just scary enough”. While the LNP (Liberal National Party) state government in Queensland recently has appointed Mr Dutton to the board of the QIC (Queensland Investment Corporation, the investment manager of the state’s Aus$135 billion in assets), it’s understood his duties in the Aus$130,000 per annum role will be neither onerous nor time-consuming so there’ll be ample opportunity for film-shoots. Although when in opposition the LNP had decried the ALP (Australian Labor Party) government’s frequent appointment of ALP figures to lucrative sinecures, once in office the LNP continued the “jobs for the boys” tradition. In the modern era, the two most striking characteristics of right-wing fanatics are (1) a fondness for sitting safely in a bunker while advocating for (and sometimes sending) other people's children to go and fight a war somewhere and (2) after a career spent extolling the virtues of “private enterprise” and criticizing “government waste”, being anxious to get back on the public payroll as soon as their political careers end. Reassuringly for taxpayers who may have been worried Mr Dutton would not be able to continue to enjoy the lifestyle to which their taxes made him accustomed (“entitled” as he might have put it), it’s believed his director’s fees from QIC will not affect his parliamentary pension (understood to be between Aus$260,000 and Aus$280,000 per annum).

The Buick Nailhead

In the 1930s, the straight-8 became a favorite for manufacturers of luxury cars, attracted by its ease of manufacture (components and assembly-line tooling able to be shared with those used to produce a straight-6), the mechanical smoothness inherent in the layout and the ease of maintenance afforded by the long, narrow configuration, ancillary components readily accessible. However, the limitations were the relatively slow engine speeds imposed by the need to restrict the “crankshaft flex” and the height of the units, a product of the long strokes used to gain the required displacement. By the 1950s, it was clear the future lay in big-bore, overhead valve V8s although the Mercedes-Benz engineers, unable to forget the glory days of the 1930s when the straight-eight W125s built for the Grand Prix circuits generated power and speed Formula One wouldn’t see until the 1980s, noted the relatively small 2.5 litre (153 cubic inch) displacement limit for 1954 and conjured up a final fling for the layout. Used in both Formula One as the W196R and in sports car racing as the W196S (better remembered as the 300 SLR) the new 2.5 & 3.0 litre (183 cubic inch) straight-8s, unlike their pre-war predecessors, solved the issue of crankshaft flex (the W196's redline was 9500 compared with the W125's 5800) by locating the power take-off at the centre, adding mechanical fuel-injection and a desmodromic valve train to make the things an exotic cocktail of ancient & modern (on smooth racetracks and in the hands of skilled drivers, the swing axles at the back not the liability they might sound). Dominant during 1954-1955 in both Formula One & the World Sports Car Championship, they were the last of the straight-8s in top-line competition.

Across the Atlantic, the US manufacturers also abandoned their straight-8s. Buick introduced their overhead valve (OHV) V8 in 1953 but, being much wider than before, the new engine had to be slimmed somewhere to fit between the existing inner-fenders (it would not be until later the platform was widened). To achieve this, the engineers narrowed the cylinder heads, compelling both a conical (the so-called “pent-roof”) combustion chamber and an arrangement in which the sixteen valves pointed directly upwards on the intake side, something which not only demanded an unusual pushrod & rocker mechanism but also limited the size of the valves. So, the valves had to be tall and narrow and, with some resemblance to nails, they picked up the nickname “nail valves”, morphing eventually to “nailhead” as a description of the whole engine. The valve placement and angle certainly benefited the intake side but the geometry compromised the flow of exhaust gases which were compelled by their anyway small ports to make a turn of almost 180° on their way to the tailpipe. As an indication of the heat-soak generated by that 180° turn, the surrounding water passages were very wide.

It wasn't the last time the head design of a Detroit V8 would be dictated by considerations of width. When Chrysler in 1964 introduced the 273 cubic inch (4.5 litre) V8 as the first of its LA-Series (that would beget the later 318 (5.2), 340 (5.6) & 360 (5.9) as well as the V10 made famous in the Dodge Viper), the most obvious visual difference from the earlier A-Series V8s was the noticeably smaller cylinder heads. The A engines used a skew-type valve arrangement in which the exhaust valve was parallel to the bore with the intake valve tipped toward the intake manifold (the classic polyspherical chamber). For the LA, Chrysler rendered all the valves tipped to the intake manifold and in-line (as viewed from the front), the industry’s standard approach to a wedge combustion chamber. The reason for the change was that the decision had been taken to offer the compact Valiant with a V8 but it was a car which had been designed to accommodate only a straight-six and the wide-shouldered polyspheric head A-Series V8s simply wouldn’t fit. So, essentially, wedge-heads were bolted atop the old A-Series block but the “L” in LA stood for light and the engineers wanted something genuinely lighter for the compact (in contemporary US terms) Valiant. Accordingly, in addition to the reduced size of the heads and intake manifold, a new casting process was developed for the block (the biggest, heaviest part of an engine) which made possible thinner walls. "Light" is however a relative term and the LA series was notably larger and heavier than Ford's "Windsor" V8 (1961-2000) which was the exemplar of the "thin-wall" technique. This was confirmed in 1967 when, after taking control of Rootes Group, Chrysler had intended to continue production of the Sunbeam Tiger, by then powered by the Ford Windsor 289 (4.7 litre) but with Chrysler’s 273 LA V8 substituted. Unfortunately, while 4.7 Ford litres filled it to the brim, 4.5 Chrysler litres overflowed; the Windsor truly was compact. Allowing it to remain in production until the stock of already purchased Ford engines had been exhausted, Chrysler instead changed the advertising from emphasizing the “…mighty Ford V8 power plant” to the vaguely ambiguous “…an American V-8 power train”.

322 cubic inch Nailhead in 1953 Buick Skylark convertible (left) and 425 cubic inch Nailhead in 1966 Buick Riviera GS (with dual-quad MZ package, right). Note the “Wildcat 465” label on the air cleaner, a reference to the claimed torque rating, something most unusual, most manufacturers using the space to advertise horsepower or cubic inch displacement (CID).

The nailhead wasn’t ideal for producing top-end power but the design did generate prodigious low-end torque, something much appreciated by Buick's previous generation of buyers who had much relished the low-speed responsiveness of the famously smooth straight-8. However, like everybody else, Buick hadn’t anticipated that as the 1950s unfolded, the industry would engage in a “power race”, something to which the free-breathing Cadillac V8s and Chrysler’s Hemis were well-suited. For that, the somewhat strangulated Buick Nailhead was not at all suited and to gain power the engineers were compelled to add high-lift, long-duration camshafts which enabled the then magic 300 HP (horsepower) number to be achieved but at the expense of smoothness; tales of Buick buyers (long accustomed to straight-8s that ran so smoothly at idle it could be hard to tell if the things were running) returning to the dealer to fix the “rumpity-rump” became legion. Still, the Nailhead was robust, relatively light and offered what was then a generous displacement and the ever inventive hot-rod community soon worked out the path to power was to use forced induction and invert the valve use, the supercharger blowing the fuel-air mix into the combustion chambers through the exhaust ports while the exhaust gases were evacuated through the larger intake ports. Thus, for a while, the Nailhead enjoyed a role as a niche player although the arrival in the mid-1950s of the much more tuneable Chevrolet V8s ended the vogue for all but a few devotees who continued use well into the 1960s. Buick acknowledged reality and, unusually, instead of following the industry trend and drawing attention to displacement & power, publicized their big torque numbers, confusing some (though probably not Buick buyers who were a loyal crew who sometimes would look down on more expensive Cadillacs because they were "flashy"). The unique appearance of the old Nailhead retains some nostalgic appeal for the modern hot-rod community and they do sometimes appear, a welcome change from the more typical small-block Fords or Chevrolets.

Not confused about numbers was the USAF (United States Air Force) which was much interested in power for its aircraft but also had a special need for torque on the tarmac and briefly that meant another quirky niche for the Nailhead. The Lockheed SR-71 Blackbird (1964-1979) was a long-range, high-altitude supersonic (Mach 3+) aircraft used by the USAF for reconnaissance between 1966 and 1998 and by NASA (National Aeronautics & Space Administration) for observation missions as late as 1999. Something of a high-water mark among the extraordinary advances made in aeronautics and materials construction during the Cold War, the SR-71 used Pratt & Whitney J58 turbojet engines which featured an innovative, secondary air-injection system for the afterburner, permitting additional thrust at high speed. The SR-71 still holds a number of altitude and speed records and Lockheed’s SR-72, a hypersonic unmanned aerial vehicle (UAV) is said to be in an “advanced stage” of design and construction although whether any test flights will be conducted before 2030 remains unclear, the challenges of sustaining in the atmosphere velocities as high as Mach 6+ onerous given the heat generated and stresses imposed by the fluid dynamics of air at high speed.

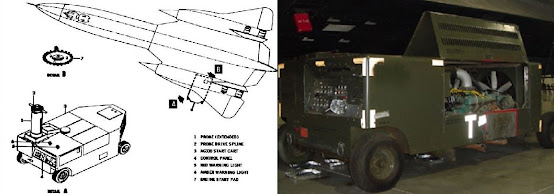

Drawing from user manual for AG330 starter cart (left) and AG330 starter cart with dual Buick Nailhead V8s (right).

At the time, the SR-71 was the most exotic aircraft on the planet but during testing and early in its career, just for the engines to start it relied on a pair of even then technologically bankrupt Buick Nailhead V8s. These were mounted in a towed cart and were effectively the turbojet’s starter motor, a concept developed in the 1930s as a work-around for the technology gap which emerged as the V12 aero-engines became too big to start by hand but lacked on-board electrical systems to trigger ignition. The two Nailheads were connected by gears to a single, vertical drive shaft which ran the jet up to the critical speed at which ignition became self-sustaining. The engineers chose the Nailheads after comparing them to other large displacement V8s, the aspect of the Buicks which most appealed being the torque generated at relatively low engine speeds, a characteristic ideal for driving an output shaft, torque best visualized as a "twisting" force. After the Nailhead was retired in 1966, later carts used Chevrolet big-block V8s but in 1969 a pneumatic start system was added to the infrastructure of the USAF bases from which the SR-71s most frequently operated, the sixteen-cylinder carts relegated to secondary fields the planes rarely used.