Noon (pronounced noon)

(1) Midday; twelve o'clock in the daytime or the time or point at which the sun crosses the local meridian (the time of day when the sun is in its zenith).

(2) Figuratively (usually in literary or poetic use), the highest, brightest, or finest point or part; culmination; capstone; apex.

(3) The corresponding time in the middle of the night; midnight (archaic but historic use means old documents with the word must be read with care, entries appearing as both “noon” & “noon of the night”).

(4) Three o’clock in the afternoon (archaic).

(5) To relax or sleep around midday (as “to noon”, “nooning” or “nooned”) (archaic).

(6) The letter ن in Arabic script.

(7) Midday meal (archaic).

Pre 900: From the Middle English noen, none & non, from the Old English nōn (the ninth hour), from a Germanic borrowing of the Classical Latin nōna (ninth hour) (short for nōna hōra), the feminine singular of nonus (ninth), contracted from novenos, from novem (nine). It was cognate with the Dutch noen, the (obsolete) German non and the Norwegian non.

Synonyms (some archaic) include apex, capstone, meridian, midday, noontide, noonday, noontime, nones (the ninth hour of daylight), midpoint (of the day), & twelve. Descendants include the Modern English none and the Scots nane (none), Noon the proper noun enduring as a surname. Noon is a noun and noons, nooning & nooned are verbs; the noun plural is noons.

Although derived from the Latin word for the number nine, the English word noon refers to midday, the time when the sun reaches the meridian. The Romans however counted the hours of the day from sunrise which, for consistency, was declared for this purpose to be 06:00; the ninth hour (nona hora) was thus 15:00. The early Christians adopted Jewish customs of praying at certain hours and when Christian monastic orders formed, the ecclesiastical reckoning of the daily timetable was structured around the hours for prayer. In the earliest schedules, the monks prayed at three-hour intervals: 6-9 pm, 9 pm-midnight, midnight-3 am and 3-6 am. The prayers are known as the Divine Office and the times at which they are to be recited are the canonical hours:

Vigils: night

Matins: dawn

Lauds: dawn

Prime: 6 am (first hour)

Terce: 9 am (third hour)

Sext: noon (sixth hour)

None: 3 pm (ninth hour)

Vespers: sunset

Compline: before bed

The shift in the common meaning of noon from 3 pm to 12 noon began in the twelfth century when the prayers said at the ninth hour were set back to the sixth, the reasoning practical rather than theological, the unreliability of medieval time-keeping devices and the seasonal elasticity of the hours of daylight in northern regions meaning it was easier to standardise on an earlier hour. Additionally, in monasteries and on holy days, fasting ended at nones, which perhaps offered another administrative incentive to nudge it up the clock. An alternative explanation offered by social historians is that it was simply the abbots deciding to align their noon meal with those taken in the towns and villages, the Old English word non having assumed the meaning “midday” or “midday meal” by circa 1140. Whatever the reason, the meaning shift from “ninth hour” to “sixth hour” seems to have been complete by the fourteenth century (the same path of evolution as the Dutch noen). Noon is an example of what etymologists call a fossil word, one which embeds customs of former ages.

The use as a synonym for midnight existed between the seventeenth & nineteenth centuries, apparently because the poetic phrase “noon of the night” entered popular use. The noun forenoon (the morning (ie (be)fore + noon)) applied especially to the latter part of it, those hours “when business is done”, the word emerging circa 1500. The noun noonday (middle of the day) was first used by Myles Coverdale (1488–1569), the English cleric and ecclesiastical reformer remembered for his printed translation of the Bible into English (1535) and it was used as an adjective from the 1650s. In the Old English there had been non tid (noon-tide, midday, noon) and non-tima (noon, noon-time, midday). The noun afternoon (part of the day from noon to evening) dates from circa 1300 and it was subject to an interesting shift in grammatical form. In the fifteenth & sixteenth centuries it was used as “at afternoon” but from circa 1600 this shifted to “in the afternoon”; it emerged as an adjective from the 1570s. In the Middle English there had been the mid-fourteenth century aftermete (afternoon, part of the day following the noon meal).

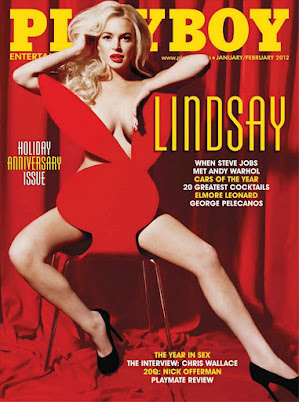

Lindsay Lohan at nuncheon, Scott's Restaurant, Mayfair, London, 2015.

The noun nuncheon was from the mid-fourteenth century nōn-schench (slight refreshment of food (with or without liquor) taken at midday), the name shifting with the meal, nuncheon taken originally in the afternoon (ie notionally the three o’clock meal). The construct was none (noon) + shench (draught, cup), from the Old English scenc, related to scencan (to pour out, to give to drink) and cognate with the Old Frisian skenka (to give to drink) and the German & Dutch schenken (to give). The most obvious descendant of nuncheon is luncheon (and thus lunch).

Lāhainā Noon is the solar phenomenon (known only in the tropics) when the Sun culminates at the zenith at solar noon, passing directly overhead, thus meaning objects underneath cast no shadow, creating an effect something like the primitive graphics in some video games. The name Lāhainā Noon (Lāhainā Noons the plural) was the winner in a contest organised by Hawai'i's Bishop Museum in 1990, the museum noting the word lāhainā (originally lā hainā) may be translated into English as “cruel sun” but makes reference also to the severe droughts experienced in that part of the island of Maui. The old Hawai'ian name for the event was the much more pleasing kau ka lā i ka lolo (the sun rests on the brains).