Algorithm (pronounced al-guh-rith-um)

(1) A set of rules for solving a problem in a finite number of steps.

(2) In computing, a finite set of unambiguous instructions performed in a prescribed sequence to achieve a goal, especially a mathematical rule or procedure used to compute a desired result.

(3) In mathematics and formal logic, a recursive procedure whereby an infinite sequence of terms can be generated.

1690s: From the Middle English algorisme & augrym, from the Anglo-Norman algorisme & augrim, from the French algorithme, re-fashioned (under mistaken connection with Greek αριθμός (arithmos) (number)) from the Old French algorisme (the Arabic numeral system) from the Medieval Latin algorismus, a (not untypical) mangled transliteration of the Arabic الخَوَارِزْمِيّ (al-ḵawārizmiyy), the nisba (the part of an Arabic name consisting of a derivational adjective) of the ninth century Persian mathematician Muḥammad ibn Mūsā al-Khwārizmī and a toponymic name meaning “person from Chorasmia” (native of Khwarazm (modern Khiva in Uzbekistan)).

It was Muḥammad ibn Mūsā al-Khwārizmī’s works which introduced to the West some sophisticated mathematics (including algebra). The earlier form in Middle English was the thirteenth century algorism from the Old French; in English it was first used in about 1230 and then by the English poet Geoffrey Chaucer (circa 1344-1400) in 1391. English adopted the French term, but it wasn't until the late nineteenth century that algorithm began to assume its modern sense. Before that, by 1799, the adjective algorithmic (the construct being algorithm + -ic) was in use and the first use in reference to symbolic rules or language dates from 1881.

The suffix -ic was from the Middle English -ik, from the Old French -ique, from the Latin -icus, from the primitive Indo-European -kos & -ḱos, formed with the i-stem suffix -i- and the adjectival suffix -kos & -ḱos. The form existed also in the Ancient Greek as -ικός (-ikós), in Sanskrit as -इक (-ika) and the Old Church Slavonic as -ъкъ (-ŭkŭ); it is a doublet of -y. In European languages, adding -kos to noun stems carried the meaning "characteristic of, like, typical, pertaining to" while on adjectival stems it acted emphatically; in English it's always been used to form adjectives from nouns with the meaning “of or pertaining to”. A precise technical use exists in physical chemistry where it's used to denote certain chemical compounds in which a specified chemical element has a higher oxidation number than in the equivalent compound whose name ends in the suffix -ous (eg sulphuric acid (H₂SO₄) has more oxygen atoms per molecule than sulphurous acid (H₂SO₃)).

The noun algorism, from the Old French algorisme, was an early alternative form of algorithm; algorismic was a related form. The meaning broadened to any method of computation and from the mid twentieth century became especially associated with computer programming to the point where, in general use, this link is often thought exclusive. The spelling algorism has been obsolete since the 1920s. Algorithm, algorithmist, algorithmizability, algorithmocracy, algorithmization & algorithmics are nouns, algorithmize is a verb, algorithmic & algorithmizable are adjectives and algorithmically is an adverb; the noun plural is algorithms.

Babylonian and later algorithms

An early Babylonian algorithm in clay.

Although there is evidence multiplication algorithms existed in Egypt (circa 2000-1700 BC), a handful of Babylonian clay tablets dating from circa 1800-1600 BC are the oldest yet found and thus record the world's first known algorithms. The calculations described on the tablets are not solutions to specific individual problems but a collection of general procedures for solving whole classes of problems. Translators consider them best understood as an early form of instruction manual. When translated, one tablet was found to include the still familiar “This is the procedure”, a phrase which captures the essence of every algorithm. There must have been many such tablets but there's a low survival rate of stuff from 40 centuries ago not regarded as valuable.

So associated with computer code has the word "algorithm" become that it's likely a goodly number of those hearing it assume this was its origin and any instance of use happens in software. The use in this context, while frequent, is not exclusive but the general perception might be it's just that. It remains technically correct that almost any set of procedural instructions can be dubbed an algorithm but given the pattern of use from the mid-twentieth century, to do so would likely mislead or confuse many who might assume they were being asked to write the source code for software. Of course, the sudden arrival of mass-market generative AI (artificial intelligence) has meant anyone can, in conversational (though hopefully unambiguous) text, ask their tame AI bot to produce an algorithm in the syntax of the desired coding language. That is passing an algorithm (using the structures of one language) to a machine which interprets the text and converts it to language in another structure, something programmers have for decades been doing for their clients.

A much-distributed general purpose algorithm (really more of a flow-chart) which seems so universal it can be used by mechanics, programmers, lawyers, physicians, plumbers, carpet layers, concreting contractors and just about anyone whose profession is object or task-oriented.

The AI bots have proved especially adept at such tasks. While a question such as: "What were the immediate implications for Spain of the formation of the Holy Alliance?" produces varied results from generative AI which seem to range from the workmanlike to the inventive, when asked to produce computer code the results seem usually to be in accord with a literal interpretation of the request. That shouldn't be unexpected; a discussion of early nineteenth century politics in the Iberian Peninsula is by its nature going to be discursive while the response to a request for code to locate instances of split infinitives in a text file is likely to vary little between AI models. Computer languages of course impose a structure where syntax needs exactly to conform to defined parameters (even the most basic of the breed such as that PC/MS-DOS used for batch files was intolerant of a single missing or mis-placed character) whereas something like the instructions to make a cup of tea (which is an algorithm even if not commonly thought of as one) can vary greatly in form even though the steps and end results can be the same.
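As a rough illustration of the kind of request mentioned above, a split-infinitive finder might be sketched in Python along these lines. The heuristic (the word "to", then a word ending in "-ly", then another word) and the name `find_split_infinitives` are assumptions made for this sketch, not what any particular AI model would return; it will miss adverbs which don't end in "-ly" and will wrongly flag phrases such as "to fly away".

```python
import re

# Heuristic (an assumption for this sketch): "to" + a word ending in "-ly" + another word.
SPLIT_INFINITIVE = re.compile(r"\bto\s+(\w+ly)\s+(\w+)", re.IGNORECASE)


def find_split_infinitives(text):
    """Return (line_number, matched_phrase) pairs for each suspected split infinitive."""
    hits = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in SPLIT_INFINITIVE.finditer(line):
            hits.append((line_number, match.group(0)))
    return hits


if __name__ == "__main__":
    sample = "To boldly go where no one has gone.\nShe decided to go quietly."
    for line_number, phrase in find_split_infinitives(sample):
        print(f"line {line_number}: {phrase}")
```

To scan a text file, the same function could be given the file's contents (for instance, the result of reading the file into a string); the rigid syntax of the regular expression contrasts with the many equally valid ways the same request could be phrased in English.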

The so-called “rise of the algorithm” is something that has attracted much comment since social media gained critical mass; prior to that algorithms had been used increasingly in all sorts of places but it was the particular intimacy social media engenders which meant awareness increased and perceptions changed. The new popularity of the word encouraged the coining of derived forms, some of which were originally (at least to some degree) humorous but beneath the jocularity, many discovered the odd truth. An algorithmocracy describes a “rule by algorithms”, a critique in political science which discusses the implications of political decisions being made by algorithms, something which in theory would make representative and responsible government not so much obsolete as unnecessary. Elements of this have been identified in the machinery of government such as the “Robodebt” scandal in Australia in which one or more algorithms were used to raise and pursue what were alleged to be debts incurred by recipients of government transfer payments. Despite those in charge of the scheme and relevant cabinet ministers being informed the algorithm was flawed and there had been suicides among those wrongly accused, the politicians did nothing to intervene until forced by various legal actions. While defending Robodebt, the politicians found it very handy essentially to disavow connection with the processes which were attributed to the algorithm.

The feeds generated by Instagram, Facebook, X (formerly known as Twitter) and such are also sometimes described as algorithmocracies in that it’s the algorithm which determines what content is directed to which user. Activists have raised concerns about the way the social media algorithms operate, creating “feedback loops” whereby feeds become increasingly narrow and one-sided in focus, acting only to reinforce opinions rather than inform. In fairness, that wasn’t the purpose of the design which was simply to keep the user engaged, thereby allowing the platform to harvest more of the product (the user’s attention) they sell to consumers (the advertisers). Everything else is an unintended consequence and an industry joke was that the word “algorithm” was used by tech company CEOs when they didn’t wish to admit the truth. A general awareness of that now exists but filter bubbles won’t be going away; what it did produce were the words algorithmophobe (someone unhappy or resentful about the impact of algorithms in their life) and algorithmophile (which technically should mean “a devotee or admirer of algorithms” but is usually applied in the sense of “someone indifferent to or uninterested in the operations of algorithms”), the latter represented by the great mass of consumers digitally bludgeoned into a state of acquiescent insensibility.

Among nerds, there are also fine distinctions. There are subalgorithms (sub-algorithm seems not a thing), each a (potentially stand-alone) algorithm within a larger one, a concept familiar in many programming languages as a “sub-routine” although distinct from a remote procedure call (RPC) which is a subroutine being executed in a different address space. The polyalgorithm (again hyphens just not cool) is a set of two or more algorithms (or subalgorithms), integrated in some way with instructions for choosing which to use. A very nerdy dispute does exist within mathematics and computer science around whether an algorithm, at the definitional level, really does need to be restricted to a finite number of steps. The argument can eventually extend to the very possibility of infinity (or types of infinity according to some) so it really is the preserve of nerds. In real-world application, a program is an algorithm only if (even eventually) it stops; it need not have a middle but must have a beginning and an end.
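The distinction between subalgorithms and a polyalgorithm can be sketched in Python. Everything here is illustrative: the two sorting routines stand in for any pair of subalgorithms, and the names `poly_sort` and `SMALL_LIST_THRESHOLD` (and the cut-off value of 16) are assumptions made for this example rather than anything standard.

```python
def insertion_sort(items):
    """Subalgorithm: simple, and quick enough for short lists."""
    result = list(items)
    for i in range(1, len(result)):
        current = result[i]
        j = i - 1
        # Shift larger elements right until the slot for `current` is found.
        while j >= 0 and result[j] > current:
            result[j + 1] = result[j]
            j -= 1
        result[j + 1] = current
    return result


def merge_sort(items):
    """Subalgorithm: better suited to longer lists."""
    if len(items) <= 1:
        return list(items)
    middle = len(items) // 2
    left = merge_sort(items[:middle])
    right = merge_sort(items[middle:])
    merged = []
    i = j = 0
    # Merge the two sorted halves.
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


SMALL_LIST_THRESHOLD = 16  # arbitrary cut-off, assumed for illustration


def poly_sort(items):
    """Polyalgorithm: the rule for choosing which subalgorithm to run."""
    if len(items) < SMALL_LIST_THRESHOLD:
        return insertion_sort(items)
    return merge_sort(items)


if __name__ == "__main__":
    print(poly_sort([3, 1, 2]))
    print(poly_sort(list(range(40, 0, -1)))[:5])
```

Each routine works on a finite list and every loop moves towards its exit condition, so whichever branch is chosen, the program stops, which is the real-world test described above.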

There is also the mysterious pseudoalgorithm, something less suspicious than it may first appear. Pseudoalgorithms exist usually for didactic purposes and will usually interpolate (sometimes large) fragments of a real algorithm but they may be in a syntax which is not specific to a particular (or any) programming language, the purpose being illustrative and explanatory. Intended to be read by humans rather than a machine, all a pseudoalgorithm has to achieve is clarity in imparting information, the algorithmic component there only to illustrate something conceptual rather than be literally executable. The pseudoalgorithm model is common in universities and textbooks and can be simplified because millions of years of evolution mean humans can do their own error correction on the fly.

Of the algorithmic

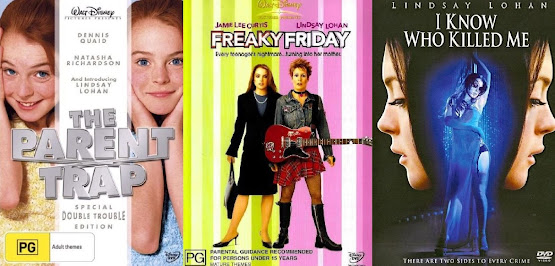

The adjective algorithmic has also emerged as an encapsulated criticism, applied to everything from restaurant menus and coffee shop décor to choices of typefaces and background music. An entire ecosystem (Instagram, TikTok etc) has been suggested as the reason for this multi-culture standardization in which a certain “look, sound or feel” becomes “commoditised by acclamation” as the “standard model” of whatever is being discussed. That critique has by some been dismissed as something reflective of the exclusivity of the pattern of consumption by those who form theories about what seem not very important matters; it’s just they only go to the best coffee shops in the nicest parts of town. In popular culture though the effect of the algorithmic is widespread, entrenched and well-understood and already the AI bots are using algorithms to write music which will be popular, needing (for now) only human performers. Some algorithms have become well-known such as the “Netflix algorithm” which presumably doesn’t exist as a conventional algorithm might but is understood as the sets of conventions, plotlines, casts and themes which producers know will have the greatest appeal to the platform. The idea is nothing new; for decades hopeful authors who sent manuscripts to Mills & Boon would receive one of the more gentle rejection slips, telling them their work was very good but “not a Mills & Boon book”. To help, the letter would include a brochure which was essentially a “how to write a Mills & Boon book” guide and it included a summary of the acceptable plot lines of which there were at one point reputedly some two dozen. The “Netflix algorithm” was referenced when Falling for Christmas, the first fruits of Lindsay Lohan’s three film deal with the platform, was released in 2022.
It followed a blending of several genres (redemption, Christmas movie, happy ending etc) and the upcoming second film (Irish Wish) is of the “…always a bridesmaid, never a bride — unless, of course, your best friend gets engaged to the love of your life, you make a spontaneous wish for true love, and then magically wake up as the bride-to-be.” school; plenty of familiar elements there so it’ll be interesting to see if the algorithm was well-tuned.

Some algorithms have become famous and others can be said even to have attained a degree of infamy, notably those used by the search engines, social media platforms and such, the Google and TikTok algorithms much debated by those concerned by their consequences. There is though an algorithm remembered as a footnote in the history of linguistic oddities and that is the Cox–Zucker machine, published in 1979 by Dr David Cox (b 1948) and Dr Steven Zucker (1949–2019). The Cox–Zucker machine (which may be called the CZM in polite company) is used in arithmetic geometry and provides a solution to one of the many arcane questions which only those in the field understand but the title of the paper in which it first appeared (Intersection numbers of sections of elliptic surfaces) gives something of a hint. Apparently it wasn’t formally dubbed the Cox–Zucker machine until 1984 but, impressed by the phonetic possibilities, the pair had been planning joint publication of something as long ago as 1970 and undergraduate humor can’t be blamed because they met as graduate students at Princeton University. The convention in academic publishing is for authors’ surnames to appear in alphabetical order and the temptation proved irresistible.